- Writing Learning Standards

- Constructing Proficiency Scales

- Designing Assessment Items

- Determining Grades

Constructing Proficiency Scales

BC’s reporting order requires teachers of Grades K-9 to use proficiency scales with four levels: Emerging, Developing, Proficient, and Extending. Teachers of Grades 10-12 may use proficiency scales but must provide letter grades and percentages. Proficiency scales help communicate to students where they are and where they are going in their learning. But many don’t. When constructing these instruments, I keep three qualities in mind…

Descriptive, Positive, Progressive and Additive

Descriptive

BC’s Ministry of Education defines Emerging, Developing, Proficient, and Extending as demonstrating initial, partial, complete, and sophisticated knowledge, respectively. Great. A set of synonyms. It is proficiency scales that describe these depths with respect to specific learning standards; they answer “No, really, what does Emerging, or initial, knowledge of operations with fractions look like?” Populating each category with examples of questions can help students–and teachers–make sense of the descriptors.

Positive

Most scales or rubrics are single-point rubrics posing as four-point ones. Their authors describe Proficient, and that’s it. The text for Proficient is copied and pasted into the Emerging and Developing (or Novice and Apprentice) columns. Then, words such as support, some, and seldom are added. Errors, minor (Developing) and major (Emerging), too. These phrases convey to students how they come up short of Proficient; they do not tell students what they know and can do at the Emerging and Developing levels.

Progressive and Additive

BC’s Ministry of Education uses this phrase to describe profiles of core competencies: “[Profiles] are progressive and additive, and they emphasize the concept of expanding and growing. As students move through the profiles, they maintain and enhance competencies from previous profiles while developing new skills.”

I have borrowed this idea and applied it to content learning standards. It was foreshadowed by the graphic organizer at the end of my previous post: Extending contains Proficient, Proficient contains Developing, and Developing contains Emerging. (Peter Liljedahl calls this backward compatible.) For example, if a student can determine whole number percents of a number (Proficient), then it is assumed that they can also determine benchmark percents (e.g., 50%, 10%) of a number (Emerging). A move from Emerging to Proficient reflects new, more complex, knowledge, not greater independence or fewer mistakes. Students level up against a learning standard.
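As one hypothetical illustration of this nesting (the number 60 and the strategy shown are assumptions for the sake of example; students may well reason differently), finding 35% of 60 can lean directly on the benchmark percents:

$$
\begin{aligned}
10\% \text{ of } 60 &= 6 \\
5\% \text{ of } 60 &= \tfrac{1}{2}(6) = 3 \\
35\% \text{ of } 60 &= 3(6) + 3 = 21
\end{aligned}
$$

Here the Proficient question is answered by combining Emerging-level facts, which is the sense in which the higher level contains the lower one.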

Emerging and Extending

The meanings of two levels–Emerging to the left and Extending to the right–are open to debate. Emerging is ambiguous, Extending less so. Some interpretations of Extending require rethinking.

Emerging

“Is Emerging a pass?” Some see Emerging as a minimal pass; others interpret “initial understanding” as not yet passing. The MoE equivocates: “Every student needs to find a place on the scale. As such, the Emerging indicator includes both students at the lower end of grade level expectations, as well as those before grade level expectations. […] Students who are not yet passing a given course or learning area can be placed in the Emerging category.” Before teachers can construct proficiency scales that describe Emerging performance, they must land on a meaning of Emerging for themselves. This decision impacts, in turn, the third practice of a standards-based approach, designing assessment items.

Extending

A flawed framing of Extending persists: above and beyond. Above and beyond can refer to a teacher’s expectations. The result: I-know-it-when-I-see-it rubrics. “Wow me!” isn’t descriptive.

Above and beyond can also refer to a student’s grade level. Take a closer look at the MoE’s definition of Extending: “The student demonstrates a sophisticated understanding of the concepts and competencies relevant to the expected learning [emphasis added].” It is Math 6 standards, not Math 8 standards, that set forth the expected learning in Math 6. When reaching a decision about proficiency in relation to a Math 6 outcome, it is unreasonable–and nonsensical–to expect knowledge of Math 8 content.

Characterizing Extending as “I can teach others” is also problematic. Explaining does not ensure depth; it doesn’t raise a complete understanding of a concept to a sophisticated understanding. Further, “I can teach others” is not limited to one level. A student may teach others at a basic complexity level. For example, a student demonstrates an initial understanding of “add and subtract fractions” when they explain how to add proper fractions with the same denominator.

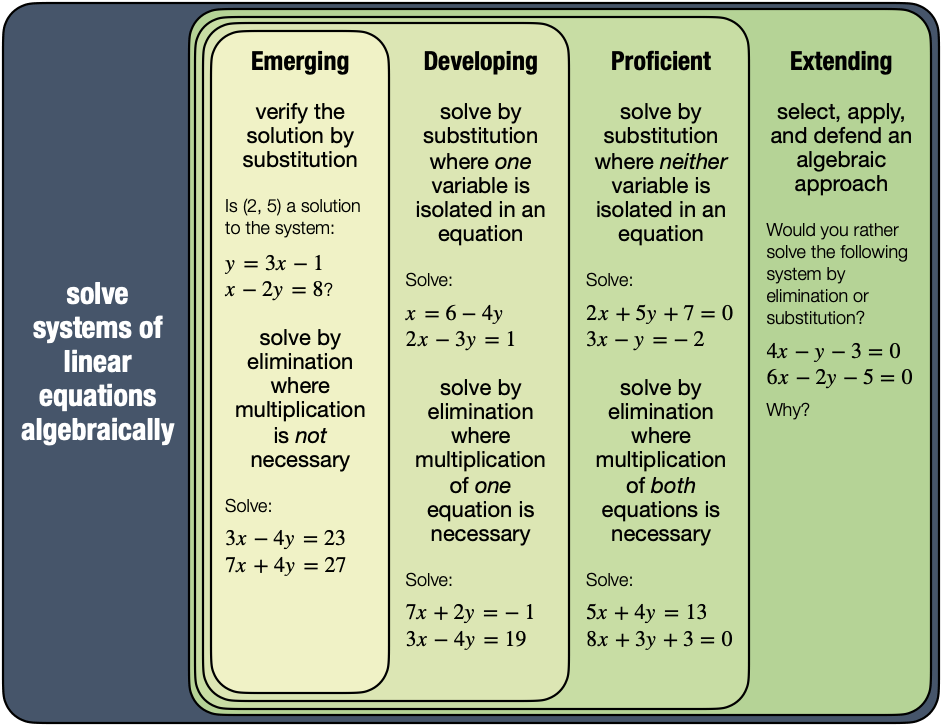

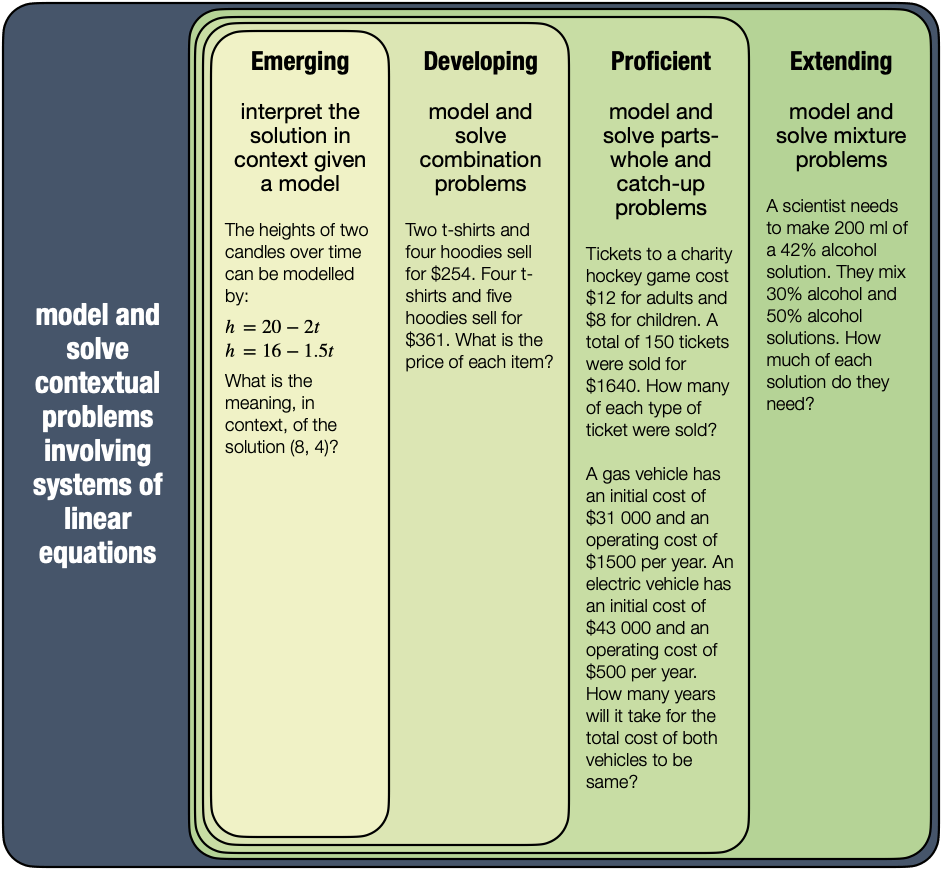

Example: Systems of Linear Equations

In my previous post, I delineated systems of linear equations as solve graphically, solve algebraically, and model and solve contextual problems. Below, I will construct a proficiency scale for each subtopic.

Note that I’ve attached specific questions to my descriptors. My text makes sense to me; it needs to make sense to students. Linear, systems, model, slope-intercept form, general form, substitution, elimination–all of these terms are clear to teachers but may be hazy to the intended audience. (Both logarithmic and sinusoidal appear alongside panendermic and ambifacient in the description of the turbo-encabulator. Substitute nofer trunnions for trigonometric identities in your Math 12 course outline and see if a student calls you on it on Day 1.) The sample questions help students understand the proficiency scales: “Oh yeah, I got this!”

Some of these terms may not make sense to my colleagues. Combination, parts-whole, catch-up, and mixture are my made-up categories of applications of systems. Tees and hoodies are representative of hamburgers and hot dogs or number of wafers and layers of stuf. Adult and child tickets can be swapped out for dimes and quarters or movie sales and rentals. The total cost of a gas vehicle surpassing that of an electric vehicle is similar to the total cost of one gym membership or (dated) cell phone plan overtaking another. Of course, runner, racing car and candle problems fall into the catch-up category, too. Textbooks are chock full o’ mixed nut, alloy, and investment problems. I can’t list every context that students might come across; I can ask “What does this remind you of?”

My descriptors are positive; they describe what students know, not what they don’t know, at each level. They are progressive and additive. Take a moment to look at my solve-by-elimination questions. They are akin to adding and subtracting quarters and quarters, then halves and quarters, then quarters and thirds (or fifths and eighths) in Math 8. Knowing [expression not shown] implies knowing [expression not shown].
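To make the fraction analogy concrete, here is a hypothetical progression of three elimination questions. These systems are illustrative assumptions, not the questions from the scale itself; each has the solution $(x, y) = (2, 3)$.

$$
\begin{aligned}
&\text{(a) add as is, like quarters and quarters:} &&
\begin{cases} x + y = 5 \\ x - y = -1 \end{cases} \\[6pt]
&\text{(b) scale one equation, like halves and quarters:} &&
\begin{cases} x + 2y = 8 \\ 3x - y = 3 \end{cases} \\[6pt]
&\text{(c) scale both equations, like quarters and thirds:} &&
\begin{cases} 2x + 3y = 13 \\ 3x - 2y = 0 \end{cases}
\end{aligned}
$$

In (a), adding the equations gives $2x = 4$. In (b), doubling the second equation first gives $7x = 14$. In (c), doubling the first and tripling the second gives $13x = 26$. Solving (c) exercises every move needed for (a) and (b), plus one more.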

Emerging is always the most difficult category for me to describe. My Emerging, like the Ministry’s, includes not yet passing. I would welcome your feedback!

Describing the Extending category can be challenging, too. I’m happy with my solve graphically description and questions. I often lean on create–or create alongside constraints–for this level. I’m leery of verb taxonomies; these pyramids and wheels can oversimplify complexity levels. Go backwards might be better. Open Middle problems populate my Extending columns across all grades and topics.

My solve algebraically… am I assessing content (i.e., systems of linear equations) or competency (i.e., “Explain and justify mathematical ideas and decisions”)? By the way, selecting and defending an approach is behind my choice to not split (👋, Marc!) substitution and elimination. I want to emphasize similarities among methods that derive equivalent systems versus differences between step-by-step procedures. I want to bring in procedural fluency:

Procedural fluency is the ability to apply procedures accurately, efficiently, and flexibly; to transfer procedures to different problems and contexts; to build or modify procedures from other procedures; and to recognize when one strategy or procedure is more appropriate to apply than another.

NCTM

But have I narrowed procedural fluency to one level?

And what about something like [example not shown]?

More complicated? Yep. More complex? Probably not.

Note that my model and solve contextual problems is described at all levels. Apply does not guarantee depth of knowledge. Separating problem solving–and listing it last–might suggest that problem solving follows building substitution and elimination methods. It doesn’t. They are interwoven. To see my problem-based approach, watch my Systems of Linear Equations videos from Surrey Schools’ video series for parents.

Next up, designing assessment items… and constructing proficiency scales has done a lot of the heavy lifting!

Really really like your thinking and describing here. We are in Year 4 of something very similar at our high school. We use Beginning, Developing, Proficient, and Advanced as our levels, but our thinking about them is almost identical. We use an ‘Unmet’ for students that haven’t reached the beginning level, as Beginning is the minimum needed to pass.

I REALLY like your nested rubric visual and have passed it along to my department as well as admin that helped us develop our standards and rubrics; they really like it as well! I really wanted to start digging into our assessments in Spring 2020, and then…well….yeah. This writing out what you are thinking helps me focus my thoughts and I’ve passed this series on to my department to help spur a conversation among us as well.

Thanks for the motivational revitalization with this series, and I’ll be following along!

Thanks, Damion! We formally launched this SBA project in 2019-20. So, yeah, it’s been difficult to maintain momentum. Some BC teachers invent their own category to the left of Emerging — Pre-emerging or Insufficient Evidence. I prefer the latter. I’d love to see what you create with the nested levels in mind. Keep me posted!