- Writing Learning Standards

- Constructing Proficiency Scales

- Designing Assessment Items

- Determining Grades

Designing Assessment Items

There is a sentiment in BC that using tests and quizzes is an outdated assessment practice. However, these are straightforward tools for finding out what students know and can do. So long as students face learning standards like “solve systems of linear equations algebraically,” test items that ask them to solve a given system of linear equations are authentic. Rather than eliminate unit tests, teachers can look at them through a different lens: a points-gathering perspective shifts to a data-gathering one. Evidence of student learning can take multiple forms (i.e., products, observations, conversations). In this post, I will focus on products, specifically unit tests, in part to push back against the sentiment above.

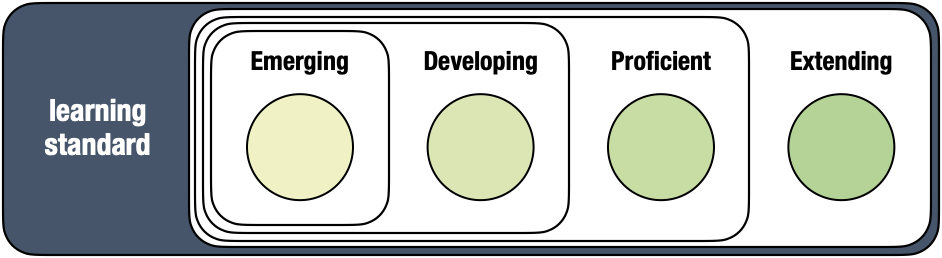

In the previous post, I constructed proficiency scales that describe what students know at each level. These instruments direct the next standards-based assessment practice: designing assessment items. Items can (1) target what students know at each proficiency level or (2) allow for responses at all levels.

Target What Students Know at Each Level

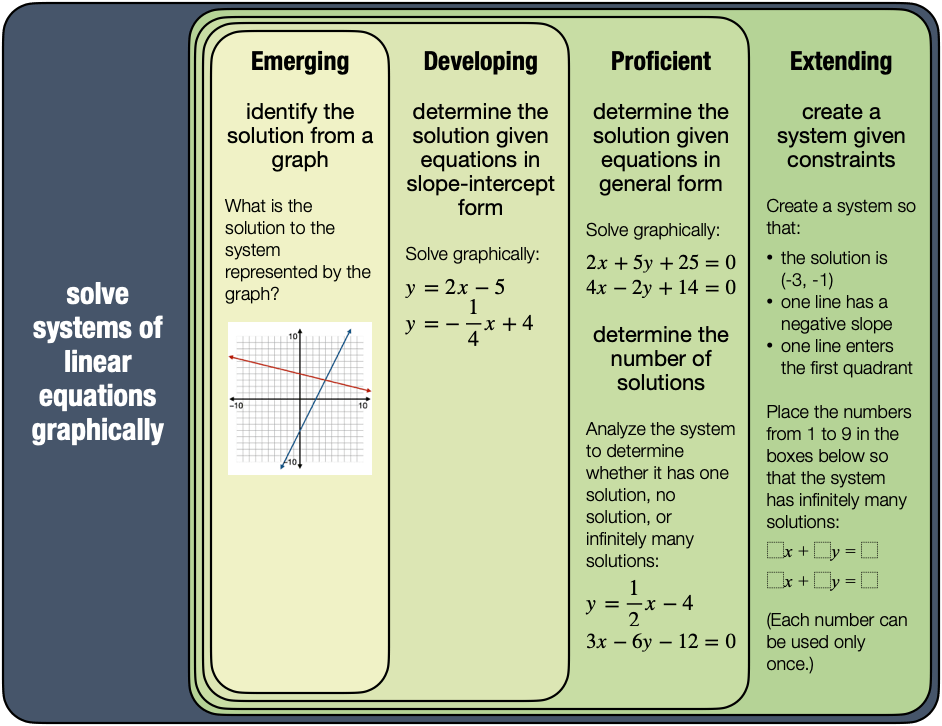

Recall that I attached specific questions to my descriptors to help students understand the proficiency scales:

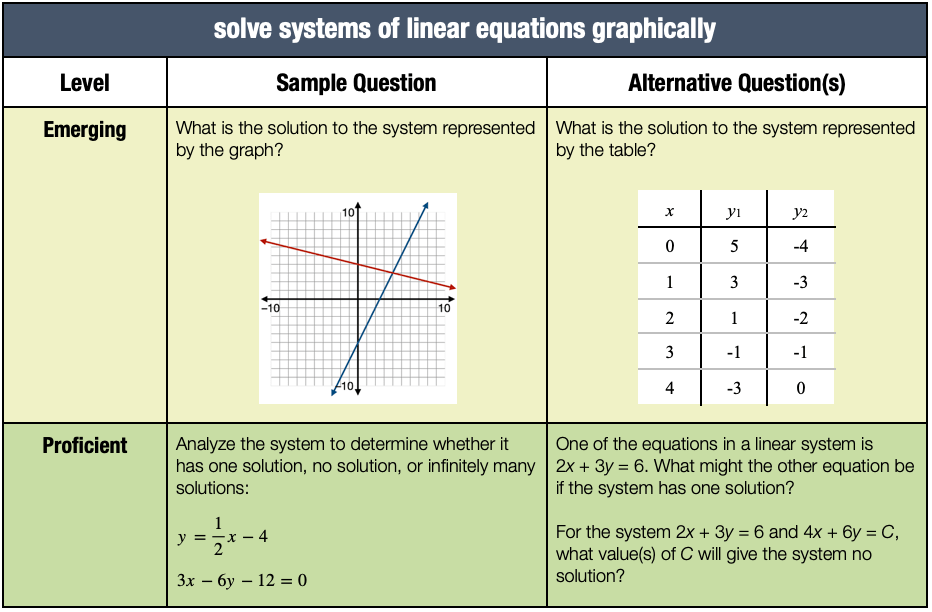

This helps teachers too. Teachers can populate a test with similar questions that reflect the appropriate complexity at each level of a proficiency scale. Keep in mind that these instruments are intended to be descriptive, not prescriptive. Sticking too closely to sample questions can emphasize answer-getting over sense-making. Questions that look different but require the same depth of knowledge are “fair game.” For example:

Prompts like “How do you know?” and “Convince me!” also prioritize conceptual understanding.

Allow for Responses at All Levels

Students can demonstrate what they know through questions that allow for responses at all levels. For example, a single open question such as “How are 23 × 14 and (2x + 3)(x + 4) the same? How are they different?” can elicit evidence of student learning from Emerging to Extending.
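Here is a worked sketch of one connection this open question can surface. It is my own illustration of a possible higher-level response, not a scoring key: both products are built from the same four partial products once x = 10.

$$
\begin{aligned}
23 \times 14 &= (20 + 3)(10 + 4) = 200 + 80 + 30 + 12 = 322 \\
(2x + 3)(x + 4) &= 2x^2 + 8x + 3x + 12 = 2x^2 + 11x + 12
\end{aligned}
$$

Substituting x = 10 into the second line gives 2(100) + 11(10) + 12 = 322, the first line. One difference a student might name: in the arithmetic, 110 and 12 regroup with 200 to give 322, whereas 11x cannot combine with 2x² or 12 because x is not fixed.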

Nat Banting’s Menu Math task from the first post in this series is an example of a non-traditional assessment item that provides both access (i.e., a “low threshold” of building a different quadratic function to satisfy each constraint) and challenge (i.e., a “high ceiling” of using as few quadratic functions as possible). A student who knows that “two negative x-intercepts” pairs nicely with “vertex in quadrant II” but not with “never enters quadrant III” demonstrates a sophisticated knowledge of quadratic functions. These items blur the line between assessment and instruction.
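The reasoning behind that pairing can be checked with a short Python sketch. It assumes a quadratic written in factored form y = a(x − r₁)(x − r₂) with two distinct negative x-intercepts r₁ and r₂; the function names are my own and are not part of Nat’s task. The vertex sits halfway between the intercepts, and one of two points always lands in quadrant III: the vertex when the parabola opens up, or a point one unit left of the smaller intercept when it opens down.

```python
from fractions import Fraction


def f(a, r1, r2, x):
    """Evaluate the factored-form quadratic y = a(x - r1)(x - r2)."""
    return a * (x - r1) * (x - r2)


def vertex(a, r1, r2):
    """The vertex lies halfway between the two x-intercepts."""
    h = Fraction(r1 + r2, 2)
    return h, f(a, r1, r2, h)


def in_quadrant_ii(point):
    x, y = point
    return x < 0 and y > 0


def quadrant_iii_witness(a, r1, r2):
    """Return a point on the graph with x < 0 and y < 0, or None.

    Assumes two distinct negative x-intercepts. Then one candidate
    always works: the vertex (if a > 0) or the point one unit left
    of the smaller intercept (if a < 0).
    """
    for x in (Fraction(r1 + r2, 2), Fraction(min(r1, r2) - 1)):
        y = f(a, r1, r2, x)
        if x < 0 and y < 0:
            return x, y
    return None


# y = -(x + 1)(x + 3): two negative x-intercepts, vertex (-2, 1) in quadrant II
print(vertex(-1, -1, -3))                    # (Fraction(-2, 1), Fraction(1, 1))
print(in_quadrant_ii(vertex(-1, -1, -3)))    # True

# ...but every such parabola still enters quadrant III
for a in (-2, -1, 1, 2):
    for r1, r2 in [(-1, -3), (-2, -5), (-4, -1)]:
        assert quadrant_iii_witness(a, r1, r2) is not None
```

The search only samples a few cases; the docstring’s two-case argument is what makes “but not with never enters quadrant III” hold for every parabola with two negative x-intercepts.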

Note that both of these items combine content (“operations with polynomials” and “quadratic functions”) and competencies (i.e., “connect mathematical concepts to one another” and “analyze and apply mathematical ideas using reason”). Assessing content is my focus in this series. Still, I wanted to point out the potential to assess competencies.

Unit Tests

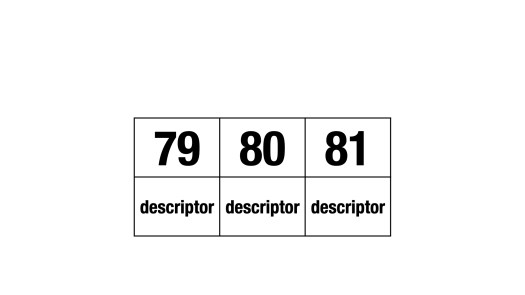

Teachers can arrange these items in two ways: (1) by proficiency level then learning outcome or (2) by learning outcome then proficiency level. A side-by-side comparison of the two arrangements:

Teachers prefer the second layout, the one that places the learning outcome above the proficiency levels. I do too. Evidence of learning relevant to a specific standard is right there on a single page; no page flipping is required to reach a decision. An open question can come before or after this set. The proficiency-level-above-learning-outcome layout works only if students demonstrate the same proficiency level across different learning outcomes. They don’t. And shouldn’t.

There’s room to include a separate page to assess competency learning standards. Take a moment to think about the following task:

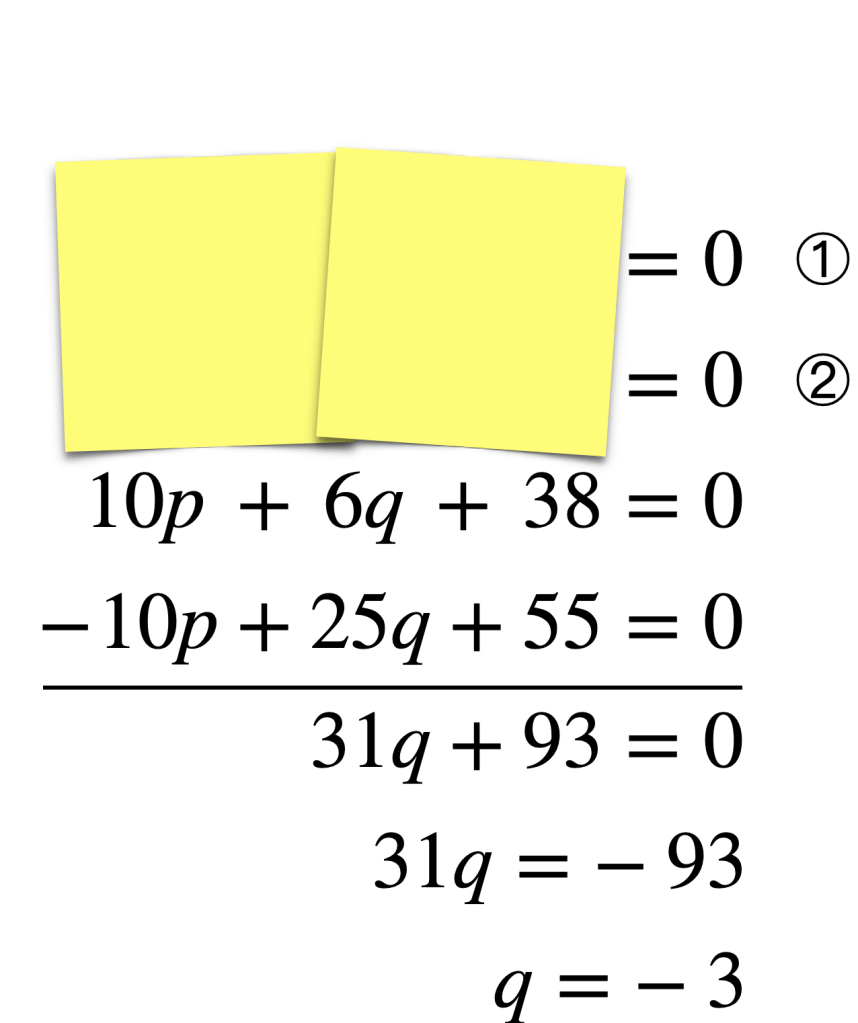

Initially, I designed this task to elicit Extending-level knowledge of “solve systems of linear equations algebraically.” In order to successfully “go backwards,” a student must recognize what happened: equivalent equations with opposite terms were created. The p-terms could have been built from 5p and 2p, which gives one possible pair of equations for ① and ②. (I’m second-guessing that this targets only Extending; a different pair of equations for ① and ② works too.) This task also elicits evidence of students’ capacities to reason and to communicate, two of the curricular competencies.
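“Going backwards” can be sketched in Python. The task’s actual equations appear only in the image, so the system below is hypothetical: ① 5p + q = 9 and ② −2p + 3q = −7, multiplied by 2 and 5 to create opposite p-terms (10p and −10p). Each equation is stored as a tuple (a, b, c) meaning ap + bq = c, and every function name is my own. `candidate_originals` lists each equation that some positive whole-number multiple turns into a given scaled equation, which is why more than one answer can work; `solve` uses Cramer’s rule (a substitute for by-hand elimination) to confirm that the candidates share a solution.

```python
from fractions import Fraction
from functools import reduce
from math import gcd

# Hypothetical representation: (a, b, c) means a*p + b*q = c
Equation = tuple[int, int, int]


def scale(eq: Equation, k: int) -> Equation:
    """Multiply every term by k, giving an equivalent equation."""
    return tuple(k * n for n in eq)


def add(e1: Equation, e2: Equation) -> Equation:
    """Add two equations term by term, as in elimination."""
    return tuple(m + n for m, n in zip(e1, e2))


def candidate_originals(scaled: Equation) -> list[Equation]:
    """Going backwards: every equation e with k * e == scaled for a whole number k >= 1."""
    g = reduce(gcd, (abs(n) for n in scaled))
    return [tuple(n // k for n in scaled) for k in range(1, g + 1) if g % k == 0]


def solve(e1: Equation, e2: Equation) -> tuple[Fraction, Fraction]:
    """Solve a 2x2 system with Cramer's rule (assumes a unique solution)."""
    (a1, b1, c1), (a2, b2, c2) = e1, e2
    det = a1 * b2 - a2 * b1
    return Fraction(c1 * b2 - c2 * b1, det), Fraction(a1 * c2 - a2 * c1, det)


eq1, eq2 = (5, 1, 9), (-2, 3, -7)           # hypothetical originals
s1, s2 = scale(eq1, 2), scale(eq2, 5)       # (10, 2, 18) and (-10, 15, -35)
print(add(s1, s2))                          # (0, 17, -17): p eliminated, so q = -1

print(candidate_originals(s1))              # [(10, 2, 18), (5, 1, 9)]
print(candidate_originals(s2))              # [(-10, 15, -35), (-2, 3, -7)]
print(solve(eq1, eq2) == solve(s1, s2))     # True: p = 2, q = -1 either way
```

Only positive multipliers are listed; allowing negative ones (e.g., 2p − 3q = 7 for ②) adds still more valid answers.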

Teacher Reflections

Many of the teachers who I work with experimented with providing choice. Students self-assessed their level of understanding and decided what evidence to provide. Most of these teachers asked students to demonstrate two proficiency levels (e.g., the most recent level achieved and one higher). Blank responses no longer stood for lost points.

Teachers analyzed their past unit tests. They discovered that progressions from Emerging to Proficient (and sometimes Extending) were already in place. Standards-based assessment just made them visible to students. Some shortened their summative assessments (e.g., Why ask a dozen Developing-level solve-by-elimination questions when two will do?).

The shift to grading based on data, not points, empowered teachers to consider multiple forms (i.e., conversations, observations, products) and sources (e.g., individual interviews, collaborative problem solving, performance tasks) of evidence.

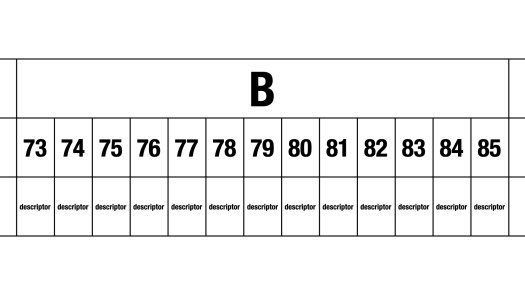

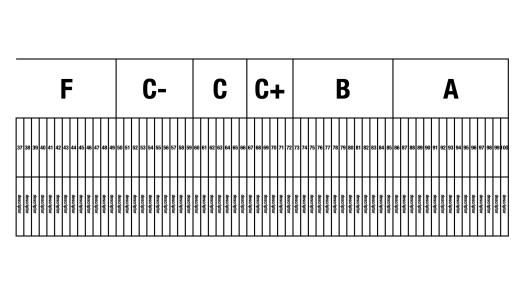

In my next post, I’ll describe the last practice: Determining Grades (and Percentages). Again, a sneak peek:

Update

Here’s a sample unit test populated with questions similar to those from a sample proficiency scale:

Note that Question 18 addresses two content learning standards: (1) solve systems of linear equations graphically and (2) solve systems of linear equations algebraically. Further, this question addresses competency learning standards such as Reasoning (“analyze and apply mathematical ideas using reason”) and Communicating (“explain and justify mathematical ideas and decisions”). The learning standard cells are intentionally left blank; teachers have the flexibility to fill them in for themselves.

Note that Question 19 also addresses competencies. The unfamiliar context can make it a problematic problem that calls for (Problem) Solving. “Which window has been given an incorrect price?” is a novel prompt that requires Reasoning.

These two questions also set up the possibility of a unit test containing a collaborative portion.

> [E]valuation is a double edged sword. When we evaluate our students, they evaluate us–for what we choose to evaluate tells our students what we value. So, if we value perseverance, we need to find a way to evaluate it. If we value collaboration, we need to find a way to evaluate it. No amount of talking about how important and valuable these competencies are is going to convince students about our conviction around them if we choose only to evaluate their abilities to individually answer closed skill math questions. We need to put our evaluation where our mouth is. We need to start evaluating what we value.

Liljedahl, P. (2021). *Building thinking classrooms in mathematics, grades K-12: 14 teaching practices for enhancing learning*. Corwin.
